Even a perfectly unbiased model often lands in the wrong bucket on Polymarket. That's not a flaw in the model. It's the very nature of the atmosphere.

In the first post of this series, I laid out a paradox: forecasting within 1°C nine times out of ten, and still losing. Many of you wrote back with the same question: "OK, but why?"

The answer fits in one sentence: the natural uncertainty of a forecast is often wider than a Polymarket bucket.

What "forecasting" really means in meteorology

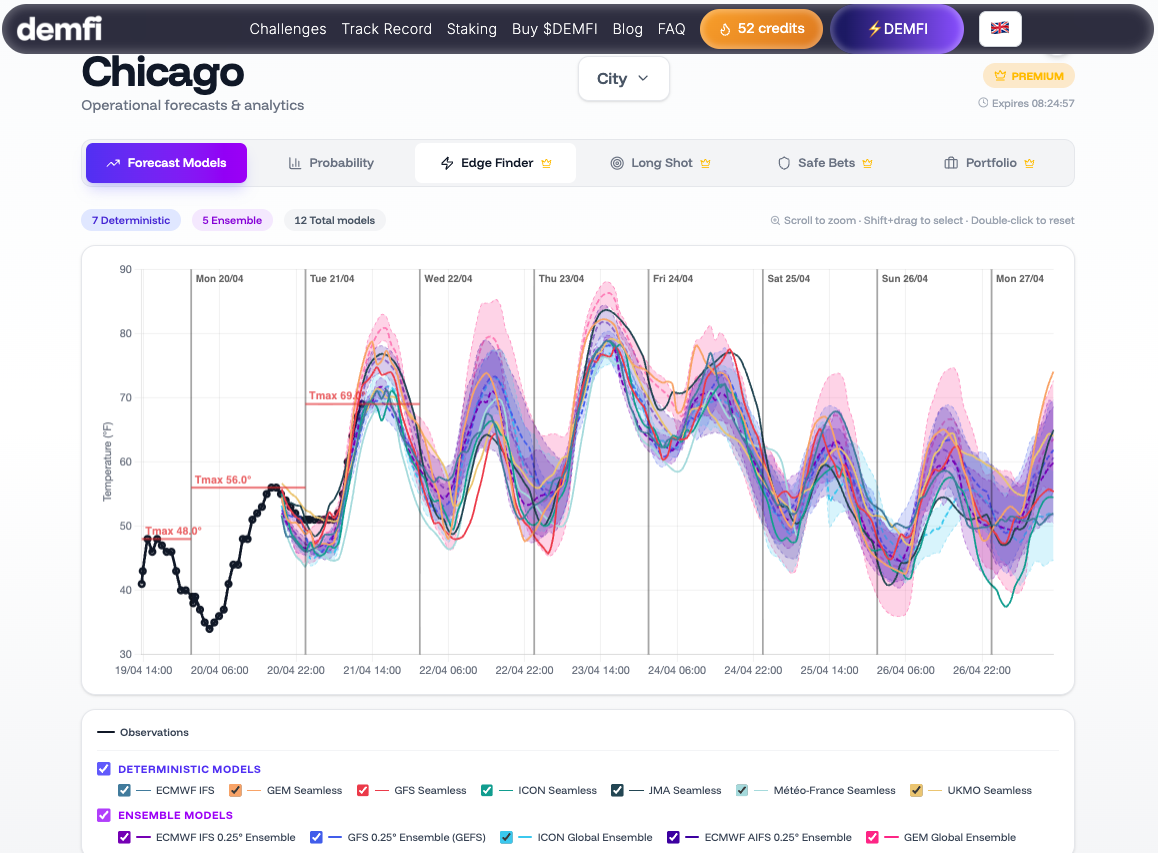

A temperature forecast isn't a number. It's a probability distribution. When a model tells you "tomorrow, 24°C in Chicago," what it really means is something like: "with what I know this morning, the most likely temperature is 24°C, but 23°C or 25°C are still very plausible, and 22°C or 26°C aren't ruled out."

That uncertainty isn't a weakness. It's a physical property of the atmosphere. Edward Lorenz formalized it in the 1960s: two nearly identical atmospheric states can evolve into very different states a few days later. That's the butterfly effect, and it has a very concrete consequence for us: you will never be able to fully remove the uncertainty from a weather forecast. You can reduce it. You can't cancel it.

In concrete terms, for maximum temperature at 24h lead time, natural uncertainty is typically on the order of 1 to 2°C. At 3 days out, closer to 2 to 3°C. At 7 days, sometimes 4°C or more.

Why this is a problem for Polymarket

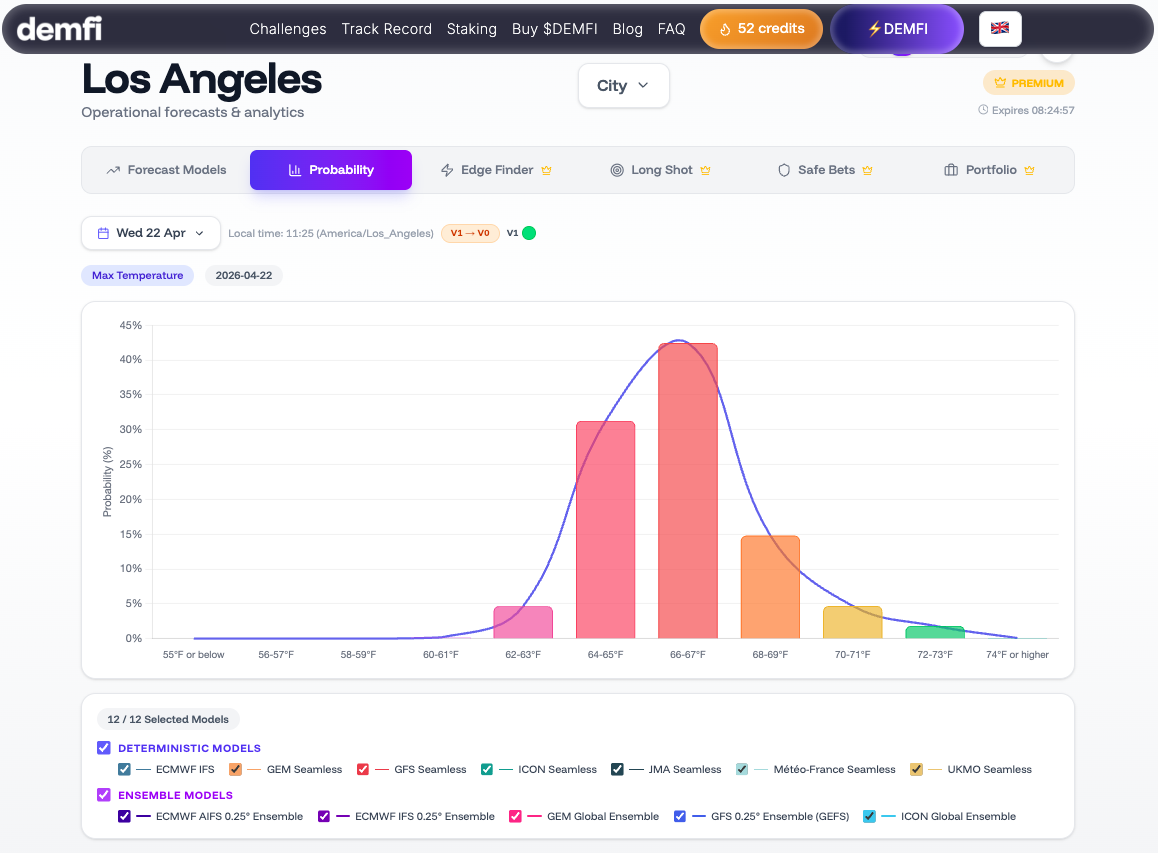

Look at a maximum-temperature market on Polymarket. Buckets are often 2°F wide in the US — or 1°C elsewhere.

Now overlay ~1.5°C of natural uncertainty at 24h on those buckets. An honest forecast almost never assigns 100% probability to a single bucket. It spreads, say, 55% on the central bucket, 25% on the one above, 15% on the one below, and the remaining 5% on the extremes.

In other words: even with the best model in the world, even with no bias at all, the "most likely" bucket typically has a 50 to 60% chance of coming out at 24h. Not 95%. Not 90%. Fifty to sixty.

That changes how you read a forecast entirely. "The model says 24°C" doesn't mean "the 23–25°C bucket is going to hit for sure." It means "the 23–25°C bucket is the most likely, but there's a serious chance — often one in three — that the outcome lands in the bucket next door."
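To make the arithmetic behind those percentages concrete, here is a minimal sketch. It rests on assumptions that are mine for illustration, not a description of our pipeline: the forecast is treated as a Gaussian, the ~1.5°C of uncertainty is read as its standard deviation, and the buckets are 2°C wide like the 23–25°C example above. The names `normal_cdf` and `bucket_probabilities` are hypothetical helpers.

```python
import math

def normal_cdf(x, mu, sigma):
    """Cumulative probability of a Gaussian N(mu, sigma) up to x."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def bucket_probabilities(mu, sigma, edges):
    """Probability mass in each bucket, including the two open-ended tails.

    edges: sorted interior bucket boundaries, e.g. [21, 23, 25, 27].
    Returns len(edges) + 1 probabilities that sum to 1.
    """
    cdf = [0.0] + [normal_cdf(e, mu, sigma) for e in edges] + [1.0]
    return [hi - lo for lo, hi in zip(cdf, cdf[1:])]

# Assumed setup: forecast of 24°C, standard deviation 1.5°C, 2°C-wide buckets.
edges = [21, 23, 25, 27]
labels = ["<21", "21-23", "23-25", "25-27", ">27"]
probs = bucket_probabilities(mu=24.0, sigma=1.5, edges=edges)

for label, p in zip(labels, probs):
    print(f"{label:>6}°C: {p:5.1%}")
```

Under these assumptions the central bucket gets roughly half of the probability, and most of the rest sits on the two neighbors. Passing a larger `sigma` (for instance 2.5 for a 3-day lead time) shows how quickly the central bucket loses weight as the horizon grows.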

Uncertainty is information, not noise

Here's the point that changes everything: the spread between models — what we call ensemble dispersion — is itself information.

When the 150 to 200 forecasts we process every day are all tightly clustered around the same value, the forecast is confident. When they diverge, the forecast is uncertain. This isn't uncertainty "because of missing data" — it's physical uncertainty, the atmosphere's own.

That dispersion is measurable. It changes every day, every hour, every city. On a stable winter morning, models agree to within 0.5°C. On a morning when a storm front is moving through, they can spread 5°C apart.

A bettor who ignores that dispersion takes the same position in both cases. A bettor who reads it doesn't.
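Here is a rough sketch of what "reading the dispersion" can look like in practice. The two ensembles below are made-up numbers for illustration, `ensemble_summary` is a hypothetical helper, and counting raw members per bucket is a deliberately crude estimate that assumes every member is equally credible and unbiased.

```python
import statistics

def ensemble_summary(members, lo, hi):
    """Return (mean, spread, share of members inside the bucket [lo, hi))."""
    mean = statistics.fmean(members)
    spread = statistics.stdev(members)  # sample standard deviation
    inside = sum(lo <= t < hi for t in members) / len(members)
    return mean, spread, inside

# Made-up ensembles of forecast maximum temperatures (°C), illustration only.
calm_day = [23.8, 24.0, 24.1, 23.9, 24.2, 24.0, 23.7, 24.3]
front_day = [21.5, 26.0, 24.0, 22.5, 25.5, 23.0, 27.0, 22.0]

for name, members in [("calm", calm_day), ("front", front_day)]:
    mean, spread, inside = ensemble_summary(members, lo=23.0, hi=25.0)
    print(f"{name}: mean={mean:.1f}°C spread={spread:.2f}°C in-bucket={inside:.0%}")
```

Both days point to almost the same mean, yet the share of members inside the 23–25°C bucket is very different. The headline number alone would hide that.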

What this means for your decisions

Three concrete consequences:

- The "central" bucket is never the truth. It's the mode of a distribution, not the outcome. If its price on Polymarket is above its true probability (say 70% when the distribution only supports 55%), it's a bad bet, even though it's the "most likely" bucket.

- Uncertain days aren't days to avoid. They're days when information has more value. Neighboring buckets become attractive, and portfolio strategies (YES/NO across several contiguous buckets) can beat a bet concentrated on the "obvious" bucket.

- A systematic 2°C bias is far more damaging than 1.5°C of natural uncertainty. Uncertainty you manage with position sizing and portfolio construction. Bias makes you lose systematically. That's why bias correction (our V1) is non-negotiable, but that's the subject of the next post.
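The first and third consequences can be checked with a few lines of arithmetic. This is a sketch under stated assumptions, not our V1: a YES share pays 1 if the bucket hits, fees and slippage are ignored, the forecast error is Gaussian with a 1.5°C standard deviation, and the bucket is the 2°C-wide 23–25°C example. `edge_per_share` and `hit_probability` are hypothetical helper names.

```python
import math

def normal_cdf(x, mu, sigma):
    """Cumulative probability of a Gaussian N(mu, sigma) up to x."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def edge_per_share(prob, price):
    """Expected profit of buying one YES share that pays 1 if the bucket hits.

    Ignores fees and slippage (a simplifying assumption).
    """
    return prob * (1.0 - price) - (1.0 - prob) * price  # equals prob - price

def hit_probability(forecast, bias, sigma, lo, hi):
    """Chance the outcome lands in [lo, hi] when the forecast runs `bias` too warm."""
    true_center = forecast - bias
    return normal_cdf(hi, true_center, sigma) - normal_cdf(lo, true_center, sigma)

# Consequence 1: central bucket priced at 0.70, distribution supports 0.55.
print(f"edge at 0.70: {edge_per_share(0.55, 0.70):+.2f} per share")

# Consequence 3: same 1.5°C spread, with and without a 2°C warm bias.
for bias in (0.0, 2.0):
    p = hit_probability(forecast=24.0, bias=bias, sigma=1.5, lo=23.0, hi=25.0)
    print(f"bias={bias:.0f}°C: central bucket hits {p:.0%} of the time")
```

Under these assumptions, buying the "most likely" bucket at 0.70 loses about 0.15 per share on average, and a 2°C bias cuts the hit rate of the bucket the model points to from about one half to under one quarter.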

What's next

In the coming weeks, the series continues:

- How to measure a weather bias — and why it's the first thing our V1 does

- Why the "most likely" bucket isn't always the most profitable — the link between probability and price

- How to build a coherent weather-bets portfolio — risk management applied to the weather

The goal remains the same: give you access to the scientific rigor we bring to the problem, so you can build your own strategy.

Stay sharp,

— JP