An archer can group all ten arrows inside a ten-centimeter circle. That's a precise archer. But if all ten arrows are clustered thirty centimeters to the left of the bullseye, he wins no tournament. He's precise — not accurate.

A weather model can have exactly the same pathology. And on Polymarket, the archer who keeps missing to the side drains your wallet silently.

In the first post I laid out the paradox; in the second we talked about the natural uncertainty of a forecast. Today we take on the other face of the problem: systematic bias, the kind your eyes don't catch and the kind that makes you lose every single day.

Precision is not accuracy

The metric everyone watches is the MAE — the mean absolute error. It's an honest number: it tells you how far, on average, the forecast lands from the truth. An MAE of 1°C means "on average, the model is off by one degree." That's useful. But it's incomplete.

Because the MAE doesn't tell you which side the model misses on.

Bias, on the other hand, measures exactly that: the average signed offset, forecast minus observation. A model with a bias of -1°C calls, on average, one degree lower than reality. When that offset is systematic, it isn't random and it isn't half the time. It's every time.

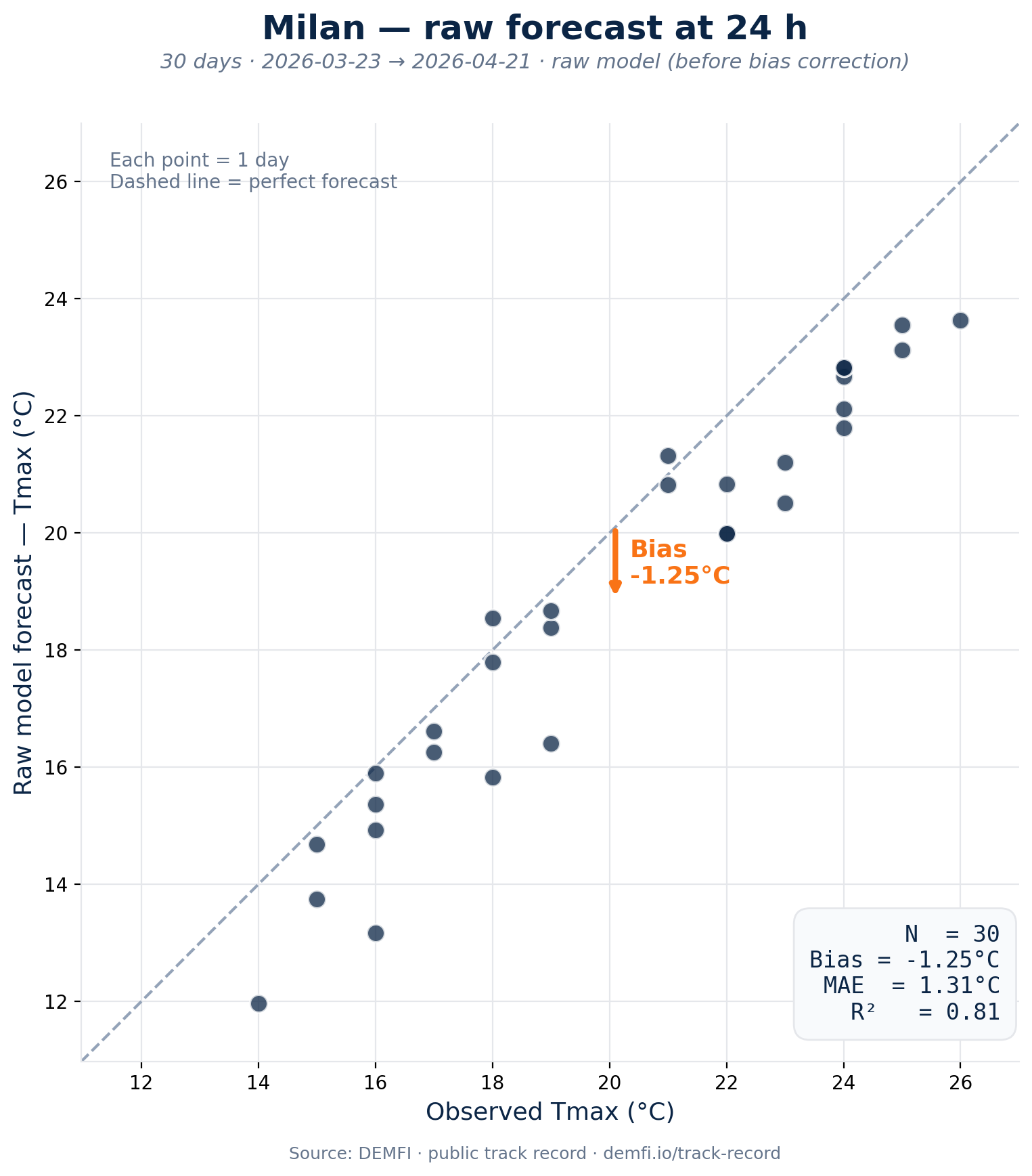
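To make the difference concrete, here is a minimal Python sketch on toy numbers (not Milan data): two hypothetical forecasters with exactly the same MAE but very different behavior. Bias is taken as forecast minus observation, so a negative value means the model runs cold; the function names are illustrative.

```python
from statistics import fmean


def _errors(forecast, observed):
    """Signed daily errors in °C: forecast minus observation."""
    if len(forecast) != len(observed):
        raise ValueError("forecast and observed must have the same length")
    return [f - o for f, o in zip(forecast, observed)]


def mae(forecast, observed):
    """Mean absolute error: how far off, on average, ignoring the side."""
    return fmean(abs(e) for e in _errors(forecast, observed))


def bias(forecast, observed):
    """Mean signed error: negative means the model systematically runs cold."""
    return fmean(_errors(forecast, observed))


observed = [20.0, 21.0, 22.0, 23.0]
scattered = [21.0, 20.0, 23.0, 22.0]  # misses by 1 °C, alternating sides
cold = [19.0, 20.0, 21.0, 22.0]       # misses by 1 °C, always too cold

print(mae(scattered, observed), bias(scattered, observed))  # 1.0 0.0
print(mae(cold, observed), bias(cold, observed))            # 1.0 -1.0
```

Both forecasters report an MAE of 1.0°C. Only the bias reveals that the second one is the archer who always pulls to the same side.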

The Milan case

Look at Milan over the last thirty days. Raw 24-hour forecasts have an MAE of 1.31°C. On paper, that's decent. Half the world would say "the model is fine." Except its bias is -1.25°C.

In other words, almost all of the error comes from the same side. The model isn't flipping between "too warm" and "too cold": it calls too cold nearly every single day.
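The arithmetic behind "almost all" takes one line. Write each day's error as forecast minus observation and split it into a warm part (how much the model overshot, or zero) and a cold part (how much it undershot, or zero). The absolute error is the sum of the two parts and the signed error is their difference, so averaging over the thirty days and plugging in Milan's two numbers gives:

$$
\begin{aligned}
\text{MAE} &= \overline{e^{+}} + \overline{e^{-}}, \qquad \text{bias} = \overline{e^{+}} - \overline{e^{-}} \\
\overline{e^{+}} &= \frac{\text{MAE} + \text{bias}}{2} = \frac{1.31 - 1.25}{2} = 0.03\,^{\circ}\text{C} \\
\overline{e^{-}} &= \frac{\text{MAE} - \text{bias}}{2} = \frac{1.31 + 1.25}{2} = 1.28\,^{\circ}\text{C}
\end{aligned}
$$

Of the 1.31°C average miss, 1.28°C (about 98%) comes from calling too cold; warm misses account for only 0.03°C.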

On Polymarket, that translates very concretely. If reality is going to land at 22°C but the model says 20°C, the bettor following the raw model buys the 20°C bucket (or buys NO on the 22°C bucket, thinking it's unlikely). They take the wrong position, every day, on markets where buckets are only 1°C wide. It's no longer a one-off miss. It's a daily, silent leak hiding behind an "acceptable" MAE.

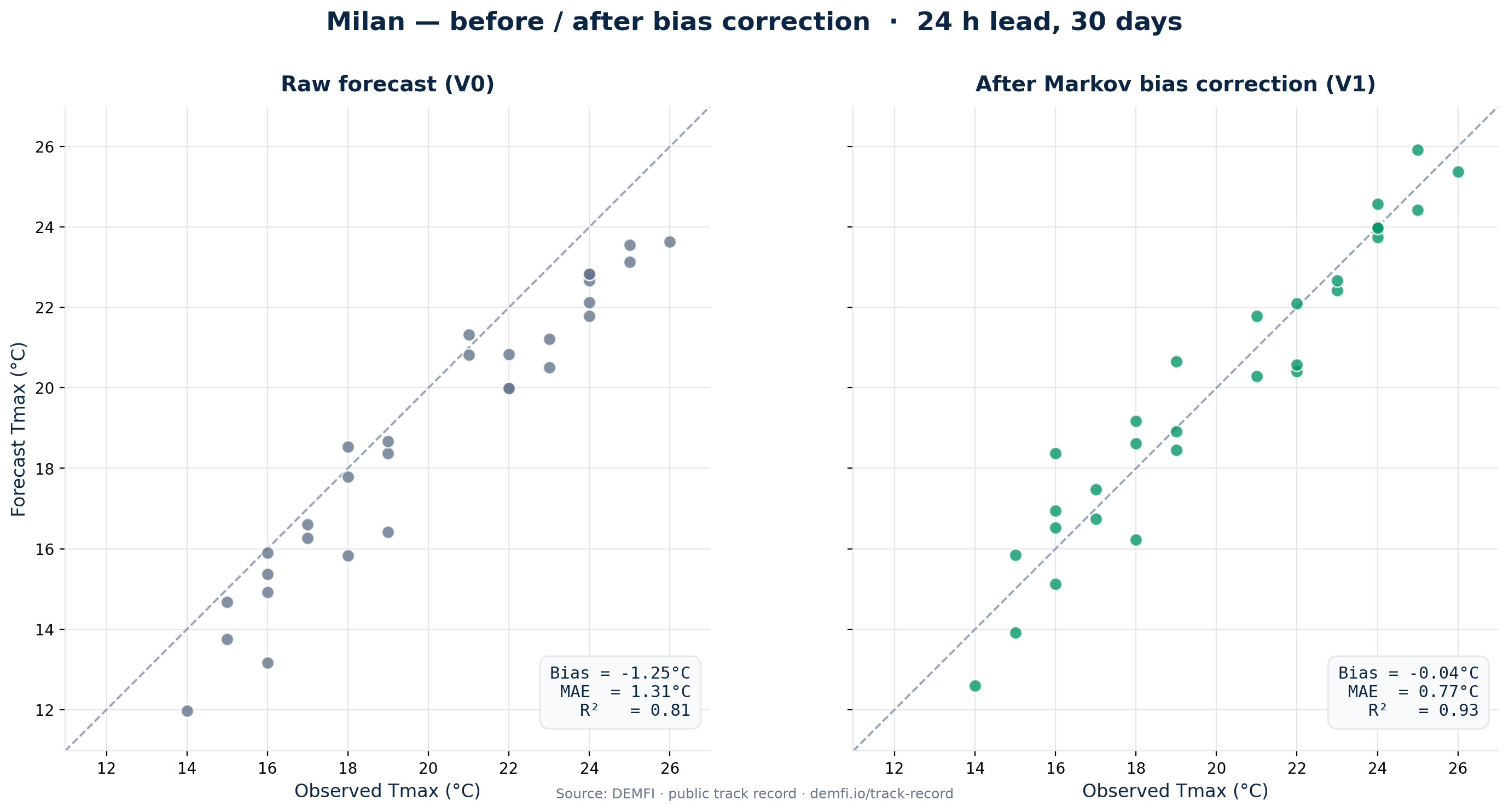
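Why does a 1.25°C bias hurt so much on 1°C buckets? Here is a toy Python sketch under two loud assumptions: each bucket is labeled by the temperature rounded to the nearest whole degree (a hypothetical rule, not Polymarket's actual resolution wording), and there is no day-to-day noise at all, only the constant bias. It spreads the true temperature evenly across one bucket, shifts the forecast by the bias, and counts how often the forecast lands in a different bucket.

```python
import math


def bucket(temp_c: float) -> int:
    """Hypothetical 1 °C bucket: nearest whole degree, halves rounded up."""
    return math.floor(temp_c + 0.5)


def wrong_bucket_rate(bias_c: float, center: int = 22, steps: int = 100) -> float:
    """Fraction of true temperatures inside one bucket for which a forecast
    shifted by a constant `bias_c` (and no random error) picks another bucket."""
    wrong = 0
    for k in range(steps):
        # True values sampled at the midpoints of `steps` equal slices of the bucket.
        observed = center - 0.5 + (k + 0.5) / steps
        if bucket(observed + bias_c) != bucket(observed):
            wrong += 1
    return wrong / steps


print(wrong_bucket_rate(-1.25))  # raw Milan bias       -> 1.0
print(wrong_bucket_rate(-0.04))  # corrected Milan bias -> 0.04
```

In this noise-free toy, the wrong-bucket rate equals the size of the bias, capped at 100% once the bias reaches a full bucket width: at -1.25°C, the raw forecast never picks the right bucket.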

How we correct it

Our V1 correction doesn't trust raw forecasts. Each morning, we anchor the day's forecast on yesterday's observation and remove the bias in real time, city by city, model by model. It's not a black box: it's a simple, auditable geometric correction.
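The V1 formula isn't spelled out here, so what follows is only a minimal sketch of one common approach to this kind of correction, not the production method: for each (city, model) pair, keep the signed errors of the last few days and subtract their average from today's raw forecast. With a one-day window, this is literally "anchoring on yesterday": today's corrected forecast is today's raw forecast plus yesterday's miss. The class name, the window size, and the model name `model_x` are hypothetical.

```python
from collections import defaultdict, deque
from statistics import fmean


class RollingBiasCorrector:
    """Hypothetical sketch: subtract the mean of the last `window` signed
    errors (forecast minus observation), tracked per (city, model)."""

    def __init__(self, window: int = 7):
        self._errors = defaultdict(lambda: deque(maxlen=window))

    def correct(self, city: str, model: str, raw_forecast: float) -> float:
        errors = self._errors[(city, model)]
        if not errors:  # no history yet: nothing to correct with
            return raw_forecast
        return raw_forecast - fmean(errors)

    def observe(self, city: str, model: str, raw_forecast: float, observed: float) -> None:
        # Once the day's observation is in, record that day's signed error.
        self._errors[(city, model)].append(raw_forecast - observed)


corrector = RollingBiasCorrector(window=1)
observed = [21.0, 22.0, 23.0, 22.5]  # toy values, not Milan data
for obs in observed:
    raw = obs - 1.25                 # a model that always runs 1.25 °C cold
    print(corrector.correct("Milan", "model_x", raw))
    corrector.observe("Milan", "model_x", raw, obs)
# prints 19.75, then 22.0, 23.0, 22.5
```

The window length is the main design choice: a one-day window reacts instantly to a drifting bias but also copies yesterday's random error into today's forecast, while a longer window smooths out one-off misses at the cost of reacting more slowly.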

On Milan, the result is unambiguous.

The scatter cloud snaps back onto the diagonal. The bias drops from -1.25°C to -0.04°C: at four hundredths of a degree, it's effectively gone. The MAE falls from 1.31°C to 0.77°C, a 41% cut. And the R² (the share of the observed variance the forecast explains) climbs from 0.81 to 0.93.

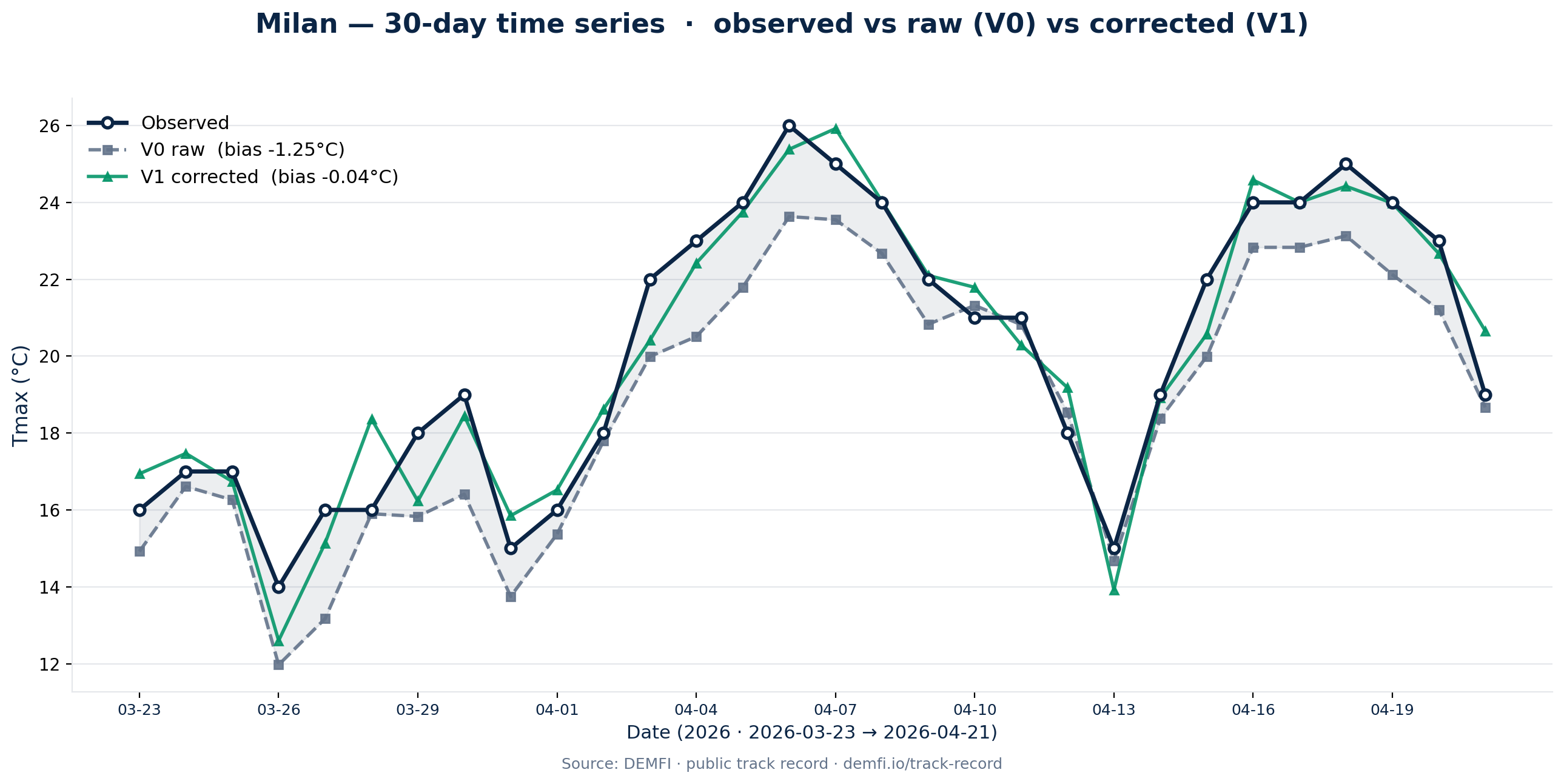

The most telling view is the thirty-day time series:

The raw forecast lives beneath the observed curve from day one to day thirty. The corrected forecast oscillates around it, as it should. The archer has stopped pulling left.

What comes next

Correcting the bias is the entry ticket. Not an option, not a "premium feature." It's the first thing we do before we look at any Polymarket price. But it's not the last.

Because even with a perfectly unbiased forecast, the most likely bucket isn't always the most profitable one to buy. That's the subject of the next post.

Happy analyzing,

— JP